Listening to 1984: An Experiment in Time Travel - Part 1 of 12

I spent the year 2020 living in the year 1984. Weirdness ensued. I kept a journal.

(Note: These journal entries from 2020 have been edited and, in some places, expanded. However, I have done my best to honor my in-the-moment impressions from that strange time—along with my impressions of 1984 as it re-unfolded—which are not always the same as my feelings from the vantage point of 2024. Yeah, it’s complicated and, as they say on Doctor Who, “timey-wimey.”)

It’s times like these when I’m alone, I miss the Iron Curtain

-Josh Rouse

January 1, 1984 / January 1, 2020

There is no dramatic landing of my time-travel ship, no wheezing and groaning as the fibers of space-time are rent asunder, no blue police box materializing on a street corner a la Doctor Who, just a slow, unsteady fading-in, an awareness of an overcast New Year’s Day in Minneapolis with the possibility of scattered flurries in the afternoon.

A flickering back-and-forth. Here I am, pen poised over a journal in 2020, enjoying the moderate warmth of a “winter” morning in Tempe, Arizona; a 45-year-old father of two, an accidental participant, via my daytime employment, in the tech revolution; a reasonably happy middle-aged man in love with my small corner of life but bewildered by the world.

Simultaneously I am nine years old, sharing a second-floor bedroom—and a bunkbed—with my red-haired six-year-old brother Daniel. There is thick, webbed frost on the windowpane near my head, and a grey light filtering in through the beige down-drawn shades, illuminating the honeycombed design of our wallpaper. Behind the walls, pipes creak and groan as the gas heat struggles to fill our 65-year-old home and afford us some protection from the 22-degree Fahrenheit cold outside.

While my adult self is adrift in the contemporary world, my nine-year-old avatar remains oblivious to the volatility outside these 1984 walls: the overnight military coup in Nigeria; the growing anxiety over the U.S. military’s continued presence in Lebanon; and the emerging, still barely understood virus that is just beginning to register in the public consciousness.

It is 6:30 AM, so my younger self is probably still asleep. I live in the cocoon of family and childhood whimsy (I speak of my younger self… I think.), sheltered in all senses—and this is a good thing, a gift.

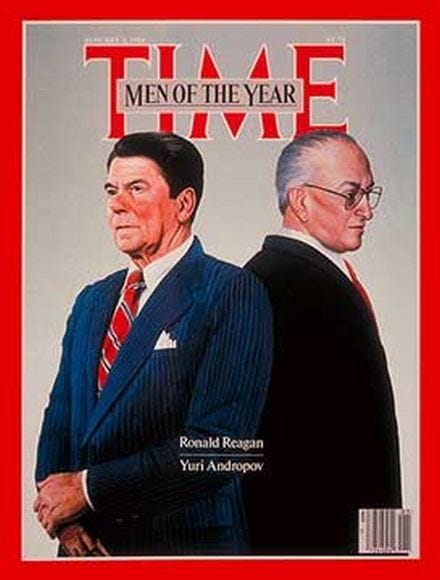

Of that outside world, I know that the President of the United States is a gentle-voiced man who reminds me of my grandparents but with better hair. I am vaguely aware that some people, including my mother, are irritated by him—perhaps because, like a grandparent, he does not always make sense. And like a grandparent—or like 45-year-old me, for that matter—he seems caught between two timestreams approximately four decades apart: in his case the hustle-bustle of 1984 and the gauzier, myth-heavy world of 1940s Hollywood. Through force of personality, he has grafted some of the glamor of that time onto the present day.

One off-note: When he raises his voice in anger, he resembles a wrathful Mr. Rogers.

I can already see that this discussion of eras, of timestreams, is going to become confusing, as is this delineation between my nine-year-old perspective and my theoretically expanded 45-year-old consciousness. And my use of present vs. past tense to describe, erm, the past will be willfully inconsistent. But let’s give it a reference point. I am, at certain moments, the inverse of Tom Hanks in Big (a movie that, from a 1984 standpoint, is four years away). My 45-year-old self is inhabiting my nine-year-old body, taking in the world of 1984 in real time with the perspective of all that I have learned since then. But at other moments I am looking back and remembering (or commenting) in the more conventional sense. Yeah, I know… we’re winging it here. But it’s a journal.

So—why am I doing this? It’s an impulse. It feels like the thing to do in 2020 is to stay out of 2020. I can point to a number of factors that are driving me from the present, chief among these that the 2020 election is likely to be the most obnoxious of my lifetime. That’s not a hard prediction to make, given the personalities involved and the fever pitch of the culture war. I don’t have a lot to lose by tuning out. Maybe at its root this is a move of self-preservation, of mental regrouping. I suppose it’s called a midlife crisis.

So, I want to leave the present. The question that follows is: Where to go? Or rather, when to go? Why not the 1980s, and specifically, 1984—my favorite year of that decade? Ever since the ‘80s ended, tastemakers have been working feverishly to explain what it all meant. I have always been suspicious of their conclusions. Just as the popular portrayal of 1967 as an acid-saturated year of free love, political protest, and mystical awakening ignores the more varied moods of the world beyond the San Francisco Bay area and certain fashionable parts of London, so too does the portrayal of 1980s America as a time of greed, selfishness, jingoism and superficiality strike me as myopic, an easy-to-grasp caricature of something that was more dynamic.

And my own memory may also be an offender here. Even though it is at variance with that broad-stroked view, it too is narrowly focused and shrouded with myth. The question that nags is: What did I miss?

January 2, 1984 / January 2, 2020

Some revelations while looking over Time magazine. I had buried the memory of cars designed to look as if they are built out of wood. But here in the Jan. 2 issue is a two-page spread on the new Plymouth Voyager: “The Magic Wagon—You’ve got to drive it to believe it.” That’s Gallagher, I believe, emerging from a puff of smoke next to this curious wood-paneled proto-SUV. (2024 Editor’s note: turns out it’s Doug Henning). I have to admit that something about the beige interior pulls on the heartstrings. I mean that in all seriousness. I believe that Dad’s black Buick, the one with the 8-track deck and the ever-present cartridges of The Beatles’ Hey Jude and Kenny Rogers’ 10 Years of Gold had such an interior: soft, warm, comforting. As soothing to the soul and as alien to modern eyes as a wood-paneled den. Well, to hell with the naysayers. These are formative influences and I will not be cowed into renouncing them!

Another revelation: even with all of the automobile and cigarette ads, a weekly issue of Time in 1984 is as dense with content as a month’s worth of the New York Times in 2020—or feels that way. Look at the size of these articles—page after page after page, drilling down into the minutiae of government deliberations, business news, and the latest on Broadway, and just when you think you’re nearing the end, you’re really just running up against another cigarette ad; there’s more article on the flipside. And this is Time. What the hell does the New Yorker look like in 1984?

I try to read magazines in 2020. They are to this what Dr. Seuss is to Tolstoy.

Final revelation: Turns out the preceding year, 1983, was terrifying. I had remembered it as the year of Return of the Jedi. But internationally it was fraught with conflict and tragedy: the shooting down of a Korean Airlines flight; the bombing of the U.S. Marines in Lebanon; the U.S. invasion of Grenada. All of this registers in memory faintly—as furtive chatter from grown-up land. To the child that is/was me, they are nightmares confined to a screen—or, now, the printed page. But revisiting these events, understanding them more, I transfer some of the anxious energy of post-millennium atrocity into this parallel timestream—for parallel it is. 1984 is happening now, not then; I’ve set it in motion. You’ll see.

January 6, 1984 / January 6, 2020

In 1984, Minneapolis is in the throes of one of the coldest winters on record, coming out of a December that saw the Mississippi River freeze over. We leave the hot water running sometimes to keep the pipes from bursting.

Now a rapid-fire burst of images: Dad warming up the car for 30 mins before hitting the road. And there’s the cheap plastic ice scraper. Is this the best kind of scraper available? The gist is you attack the ridges of ice on the windshield as if they’re plaque, and if you’re lucky you’ll chip off some pieces at the edge or explode some of it into a fine mist. And it takes for-freaking-ever. Snowplows glide up and down the street churning up massive snow piles that we sled down or burrow into. Then the brownish grey sludge takes hold of the snow’s edges, as, later on, the street salting takes effect.

I will read about the symptoms of hypothermia in Boys’ Life: the deceptive feeling of warmth and tiredness that sets in, a siren’s call to remain outside and sink into a pillow of rest. I will on occasion feel the first stirrings of that call. I read—there and elsewhere—about how a case of frostbite can recur for the rest of one’s life.

More images: yellow plastic snow brick makers, which can be used to build igloos and snow castles. My siblings and I build trenches, caves, and massive walls of snow brick.

I was happy. And most significantly, I was aware that I was happy. I remember sitting in my bedroom and realizing that I would someday look back on this moment as the past. I tried to document it for my future self. This was the era that I got my first tape recorder—a black, single-speaker Panasonic with large push-down buttons and a carrying handle—and made amateur radio shows; the era of creating comic books (“D&D” for “Dean and Dustin”). The tapes are long gone—I think? But the comics survive, testaments to my tendency, in childhood and beyond, toward dictatorship: Apart from the egalitarian name of our publishing venture, my neighbor Dustin’s creative fingerprints are nowhere to be found in the enterprise.

The comics are testaments, also, to my childhood terror of girls: The adventures of my recurring character, Secret Agent 13, occur in an almost exclusively male universe, a universe of action and monasticism. It’s the universe, come to think of it, of Boys’ Life.

When I got my driver’s license at 17, it became clear that there was a sharp delineation between the childhood wonderland of a Minnesota winter and the harsh reality of the adult experience: putting chains on tires, shoveling snow, changing out the storm windows on the house, fussing with the furnace. No, I don’t miss any of that. In Arizona we are occasionally called upon to put blankets over our plants on cold nights.

My friend T. Kyle King mentioned recently that the year 1984 is as far in the past for us now (in 2020) as it was in the future when Orwell was writing his novel. For Orwell it was a fixed point of dread. For me it is the epicenter of my nostalgia. Orwell’s novel seems, to me, more appropriate to 2020 than to the actual year 1984. Not surprisingly, the media of that actual year is filled with musings on Orwell’s “year zero” having come to pass. One Harris poll, cited by Alistair Cooke, finds growing unease about the encroachment of technology—especially its surveillance aspects—on people’s everyday lives. A prescient observation. It appears to me that we were wise to the threat, but we let it happen anyway. If anything, we threw open our arms.

January 7, 1984 / January 7, 2020

Some misconceptions are going out the window as I continue down this path. The 1980s were not a safer or more secure time than the present day. There was a widespread perception at the end of 1983 of a world in peril. Both the general public and many experts believed that nuclear war was a real possibility within the decade, and this was accompanied by a feeling of powerlessness. Two made-for-TV movies of that year, The Day After and Special Bulletin, struck a nerve with their depictions of the hypothetical nuclear fallout. And in the UK, the BBC staged a radio adaptation of Raymond Briggs’ nuclear war-themed graphic novel When the Wind Blows that stands as one of the most powerful and unsettling works of “entertainment” I have ever come across.

I was shielded from these fears, and for that I thank my parents. It may be that in pining for the “better time” of the 1980s, I have simply been pining for a happy childhood. People who were adults in the 1980s will probably have a different view of the period than I do. Makes me wonder: Is there a young adult out there somewhere pining for those halcyon days of the early 2000s, when good and evil were more clearly delineated, shoe bombers were easily identifiable, and Nelly and DMX were riding the charts?

As hard as I try, it’s difficult to make a 1980s-level appraisal of Ronald Reagan. My time-traveler’s knowledge of how everything played out—of the later emergence of a Soviet leader named Gorbachev, and the winding down of the Cold War—mutes the alarm I might feel at the late 1983-early 1984 brinkmanship of Reagan and Andropov. Given my general discomfort with conflict, and given the existential stakes of this conflict, I imagine that I would have been both terrified and angry. I don’t know that I would have grasped Reagan’s long game, if indeed there was one.

Despite all that, can I say in a nonpartisan way that I miss Reagan? I miss his style, his seeming adroitness, his generosity toward his political opponents. I miss his optimism and what George H.W. Bush called “the vision thing.” And I miss the glamour of the Reagan presidency. In my lifetime, there have been just two presidencies steeped in pageantry, and they were political opposites: Reagan and Obama. And I suspect that these are the two that future historians will give the most attention to.

There is the added allure of Reagan representing the generation of my grandparents: the so-called “Greatest Generation.” He doesn’t resemble either of my grandfathers precisely, and he didn’t serve overseas during World War II, but there is a mode of presentation, of mannerisms and humor, that is particular to their era. These were people who were, generally speaking, fastidious in their personal appearance, who valued well-made clothes, good grooming, clear and precise speech. In a very real sense, they made a world and they owned it for a long time.

But of course the 1980s were not the 1940s and ‘50s, and so this President Reagan—seemingly unchanged from the Golden Age of Hollywood—was an exotic bird, so steadfastly out-of-step as to be both an object of ridicule and awe. As a man, father, and political thinker he may have had substantial flaws, but through him that era of American life asserted itself for the very last time, and part of that final assertion would be the sunsetting of one of its most complicated legacies: the Cold War.

I am aware that my feelings are more grounded in myth and nostalgia than day-to-day reality. But myths are not nothing. As time marches on, the myths remain, gather weight, and may ultimately move the world. And here was a president forged in the cradle of mythmaking. Which bowled Gorbachev over more, I wonder: tales of Errol Flynn and William Holden or bullet-points from The Conscience of a Conservative? Ah, but we’re getting ahead of ourselves.

Amidst all these myths, there is one tangible event that has been oddly lost to posterity, or at least to my awareness, and here we shall shift back to present tense as I watch it unfold: In early January 1984, the Reverend Jesse Jackson, then a trailing candidate for the Democratic presidential nomination, somehow effects the release of an African-American fighter pilot who had been held hostage by the Syrian regime during the ongoing Lebanon crisis. How did this happen and why hasn’t Jackson rocketed to the front of the Democratic race as a result? Is the country not yet ready for a black presidential candidate? Or are there deeper issues with this particular candidate? Certainly, elements of theater and artifice have always been present in Jackson, but the same is true of the sitting president.

I am impressed by Reagan’s response to Jackson’s achievement: elation and the statement that “You can’t argue with success.” I suppose he can afford to be generous. Per Alistair Cooke’s report, no one seriously considers Jackson a contender for the nomination, even after such a coup. But why? Many years hence, participation in reality TV, of all things, will be enough to elevate a candidate not just to contender status but to the presidency itself. I’m resistant to viewing things exclusively through a racial prism, but here I wonder.

Part of me pines for an alternate timestream in which Jackson and Reagan go head-to-head in the 1984 contest. But although I am a time-traveler, I remain behind the glass, unable to influence events. At least for the moment.

January 8, 1984 / January 8, 2020

Yesterday (in the 1984 timestream) on American Top 40, guest-host Charlie Van Dyke intoned over a funk-and-calypso beat, “The first pop hit from a woman calling herself Madonna. It spent five weeks at #1 on the dance chart. This is ‘Holiday.’” So here we are. If Reagan represents the last assertion of an earlier, more traditional generation, Madonna represents the opposite: brashness, defiance, openly flaunted sexuality, a collision of glam and New York City seediness (though Madonna herself is a Michigan transplant). Although her music is pure pop, her roots in the NYC arts scene show through.

And yet she shares with Reagan an optimism—a brightness.

The song is distinguished by an instantly infectious melody and beat. The lyrics (written by Curtis Hudson) are vapid:

If we take a holiday

A time to celebrate

Just one day out of life

It would be so nice

And yet, in the context of the ongoing brinkmanship in US / Soviet relations, the lines “It’s time for the good times / Forget about the bad times” take on added poignance. I’ve understood for years that geopolitical worries served as the impetus for the “escapist” entertainment of the 1980s, but now, hearing and seeing that entertainment unfold day-by-day while taking in the news events, I’m grasping its necessity viscerally.

At age 9, I didn’t know quite what to do with Madonna. I shunned girls generally and was deeply confused by the dawning awareness of something called sex. But I remember that my friend Eddie fell hard for Madonna, and at a birthday party (probably his 10th), he had a cassette copy of Like a Virgin displayed proudly near his family stereo. I was outwardly indifferent and inwardly attracted.

The biggest surprise of the January 7 “American Top 40” is how many of the songs are still with us. In a certain sense my excavation project may be redundant because even Peter Schilling’s “Major Tom” has been making the rounds lately on ‘80s-themed radio stations.

My generation has done with the 1980s what my parents’ generation did with the 1960s. We are all still living in the decade, apparently, and that phenomenon is only intensifying, spurred on by the ‘80s-themed TV series Stranger Things and the forthcoming American Horror Story: 1984, Wonder Woman: 1984, and the new Ghostbusters movie (also set in 1984). I’m just being more systematic about it.

There are only a handful of songs from the Top 40 broadcast that have fallen out of 21st century circulation. Does anyone remember Spandau Ballet’s second hit after “True”? Me neither. How about Ray Parker Jr.’s pre-“Ghostbusters” anthem of desperation, longing, and toxic romance, “I Still Can’t Get Over Loving You”? It’s a poignant turn from an artist I’ve been only vaguely familiar with, but the last line, “Don’t you ever try to leave, no / It’ll be the last thing you’ll ever do,” makes me wonder if Ray is intentionally narrating the ramp-up to a homicide, or if this was just the tone of relationships in the 1980s. Since he references “Every Breath You Take,” we can surmise that the creepiness is intentional, but I can already tell that the accepted parameters of behavior within romantic relationships are a little different in 1984.

Other songs that have since fallen off the radar: Barry Manilow’s “Read ‘Em and Weep” (a good song, written by Jim Steinman) and Christopher Cross’s “Think of Laura” (used as a recurring musical motif in General Hospital).

We also get a foretaste of the coming Phil Collins deluge. Though most listeners wouldn’t have caught it, Collins’s nimble drumming drives Robert Plant’s “In the Mood,” this week’s #40. Higher up the chart is Genesis’s acerbic and Beatles-inflected “That’s All.” Oh ye unsuspecting listeners of the 1980s, if you only knew what was coming….!

And sitting on top like a colossus: Paul McCartney and Michael Jackson’s “Say Say Say.” What a monster hit, and a symbolic generational summit—an acknowledgment that the youngsters can have their decade, but they’ll have to share it with the boomers. Because let’s not forget, the mid-to-late ‘80s will also be an era of intense Beatles nostalgia: a market saturation that will have huge implications for my own creative development.

Bonus: 3 Hours of MTV from January 8, 1984 (Internet Archive)

January 15, 1984 / January 15, 2020

In this week’s episode of Magnum P.I., “Jororo Farewell,” there is a scene in which the sheltered young prince, who is hiding out in Magnum’s pad, says something to the effect of, “Well, I could just run away and go to Haight Ashbury and become a hippie.” Magnum stares at the kid in astonishment. “Where do you get this stuff from?” It emerges that the prince’s entire understanding of the world comes from watching TV reruns.

Was the hippie scene in Haight Ashbury at a low ebb in the 1980s, to the extent that Magnum finds the idea archaic and exotic? That was my takeaway from the episode. A new era was underway.

And was the ‘80s the last decade to have its own distinct culture and fashion sensibility that wasn’t based entirely around recycling the past? Possibly. An argument could be made that the 1990s—at least the first half—were also culturally distinct. But nostalgia was already being pushed hard in the 1980s. An upcoming issue of Rolling Stone will feature the 1964-era Beatles on the cover. M*A*S*H ended in 1983 with the most watched series finale in history. Happy Days wraps in May ’84. And the emerging medium of cable television will create a boom for older programming. Someone has already figured out that the past can be monetized. And with Reagan in the White House and Jane Wyman ensconced at Falcon Crest, the Golden Age of Hollywood is ever present.

In the coming decades, emerging technologies will feed this appetite for nostalgia-on-demand. And they have proven effective enough that they have become the means of my time travel. A person living in 1984 would have had a much harder time attempting to travel back to the 1950s. (Unless you were Marty McFly in the forthcoming Back to the Future).

And yet, there remains a veil between me and the time and place I am attempting to access. Part of this is due to the noise of the present pushing its way into my consciousness. What has been entirely unexpected is the through-line from the “current” events of 1984 to the events of today. In both 1984 and 2020, Syria is led by an Assad. Then and now, Canada has a Trudeau in charge. Then and now, tensions simmer with Iran. And in both eras our great adversary is Russia—though in 1984 it is a segment of the political left who view the threat as overblown; in 2020 that contingent is on the right.

As I mentioned before, I am discovering that the 1980s were a bit scarier than I remembered—which makes me slightly more at ease with the 2020s. And yet, as my friend Jeff Rogers points out, “Those nuclear weapons are still out there, and they are in shakier hands. Is anyone happy about Pakistan having a nuclear weapon?”

You know the good ole days weren’t always good

And tomorrow ain’t as bad as it seems

-Billy Joel, “Keeping the Faith”

Some of my inability to fully break through to 1984 stems from the fact that I have been there all along. I believe I noted elsewhere that I had already heard many of the songs from the 1984 top 40 within the last 12 months on classic rock/pop radio. Still, it’s worth noting that I find 2/3 of that top 40 countdown to contain superb examples of songcraft: Paul McCartney and Michael Jackson, Hall and Oates, Madonna, Robert Plant, Genesis, Billy Joel (two hits on the chart), and one-hit-wonders like Matthew Wilder’s “Break My Stride” and Peter Schilling’s “Major Tom.” Can I say this about any era since then? (With the caveat that there is not really a universally acknowledged top 40 at present).

January 20, 1984 / January 20, 2020

There is an intriguing idea running through the 2000s iteration of Doctor Who: Certain larger historical events are fixed and can’t be altered without doing serious damage to the fabric of time. This mandate seems to include events in one’s own past, though the series has played fast and loose with that aspect on occasion. On the flipside, a lot of history is changeable, and it is in these malleable corridors of the timestream that most of the stories take place. (It would be convenient if the Doctor had an “off-limits” app that he could provide to his companions to steer them clear of violating one of the Great Laws of Time, but I guess that would deflate some of the series’ dramatic tension).

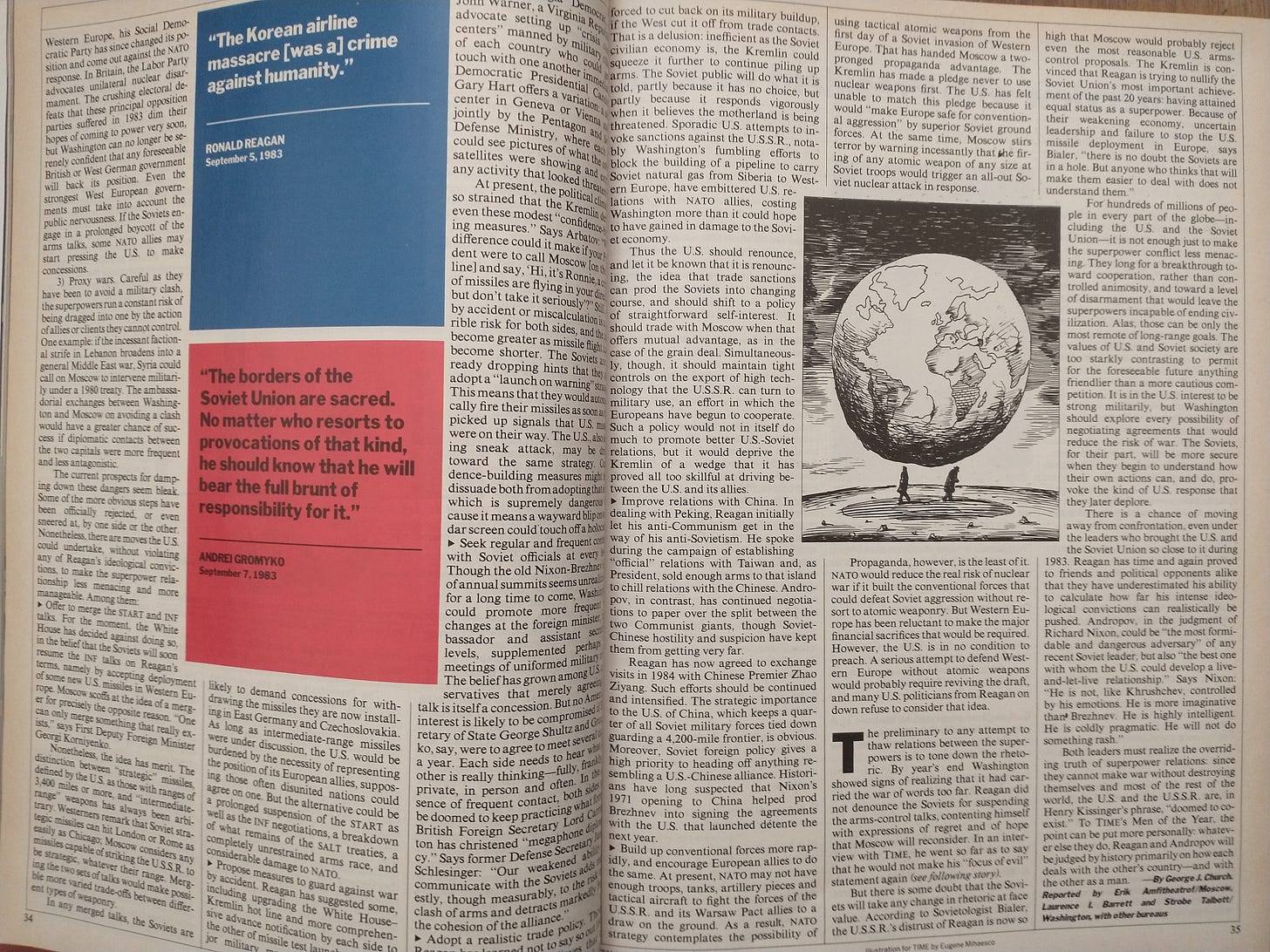

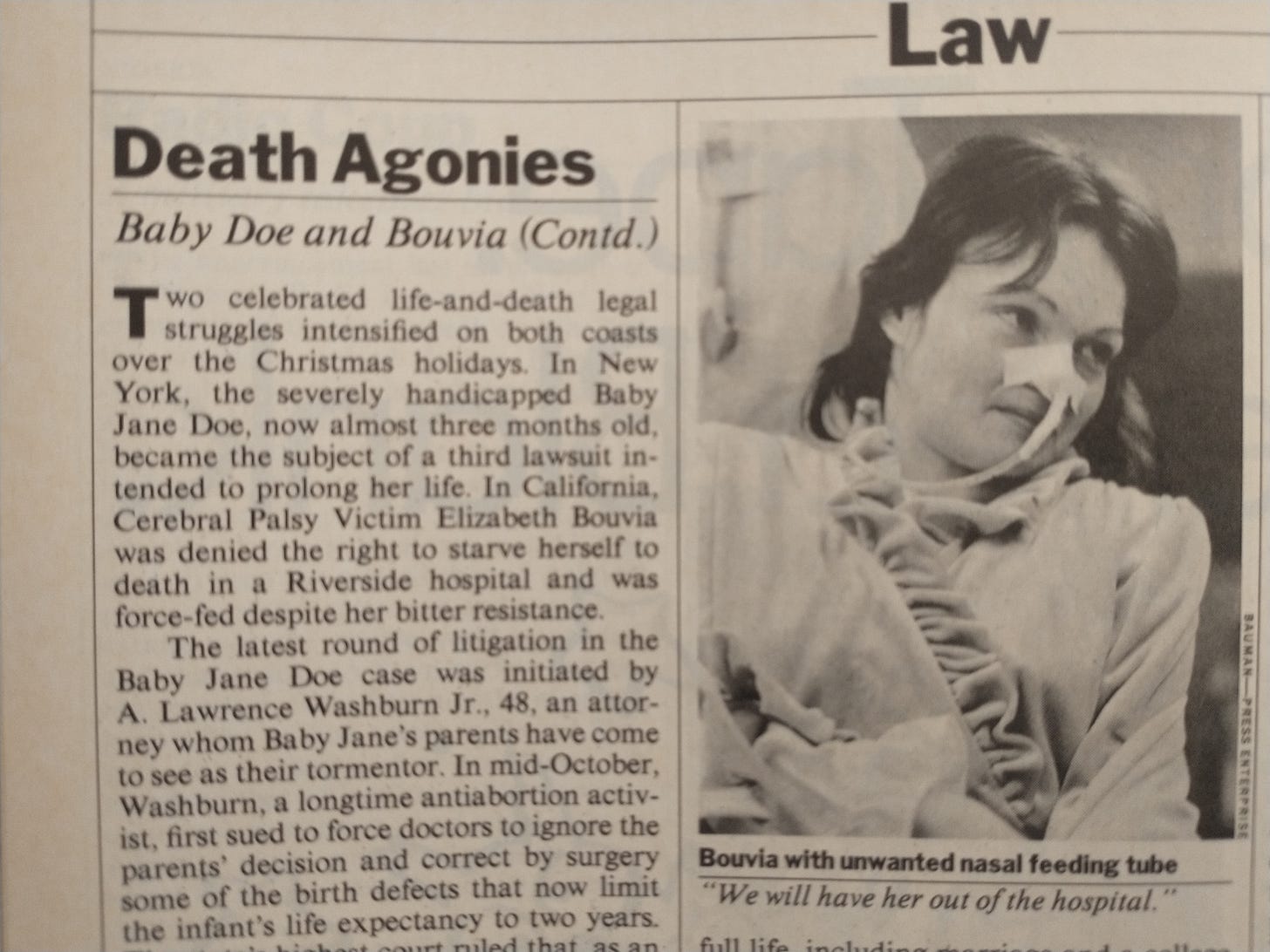

My journey through 1984 carries a similar feeling of malleability. I know how the broad events will play out—who will prevail in the presidential election, for example, and what will happen with the on-again, off-again US-Soviet disarmament negotiations. But many of the other events, because they are brand new to me, give the impression of being in flux—as if I could somehow intervene and change their course. Take the case of Elizabeth Bouvia, a severely disabled 27-year-old woman who, in January, is waging what seems to be a losing battle with the courts over her right to die. The main sticking point is that her disability makes it nearly impossible for her to end her life on her own, but if anyone else—hospital staff, friends, or family—were to assist by, say, removing her feeding tube, they would become legally complicit in her death. I know that the debate over assisted suicide will rage on, with different US states coming down on opposing sides of the question. But I don’t know Ms. Bouvia’s fate. It is in flux.

On a lighter note, I also don’t know who is going to win the 1984 Super Bowl. Newsweek’s correspondent favors the LA Raiders and all-but-likens them to a barbarian horde in their uncouth fury. But I don’t know, maybe the Washington Redskins will pull it off. In the meantime, will Yes’s “Owner of a Lonely Heart” nab the top spot on American Top 40?

Of course, this is an illusion. I know these bound editions of Time magazine contain the complete arc of Bouvia’s story as well as the name of the football team that will triumph next Sunday (or Wednesday, if we’re going by the 2020 calendar). But I like to imagine that the contents of the upcoming issues are still arranging themselves in place. Until I read them (or hear the radio news broadcasts or watch the YouTube clips) the words flow about, unfixed, like hot lava. It is only as I get closer to them that they begin to cool and set into their permanent form. Such is the magic of time travel—or, more accurately, of a sheltered childhood.

January 21, 1984 / January 21, 2020

Some more words about Elizabeth Bouvia, because her situation has gotten under my skin. In the lone photo I have seen of her in Time magazine, she looks beautiful—serene, even—with piercing, luminous eyes. This all comes through despite the incongruity of an unwanted feeding tube jammed into her nose. An extraordinary woman who transcended her physical limitations to earn a college degree and, at one point, get married. Now she wants to die, but as with everything else in her life, she needs help. And here I am caught up in this “ongoing” story that reached its resolution, one way or another, 36 years ago. (2024 Editor’s Note: Wikipedia indicates she is still alive). And yet, it’s also happening now, across the various media I am consuming in more-or-less real time. I am conflicted about her situation: grief that someone who once found so much meaning in life would now want to end it, coupled with the suspicion that I might feel the same way if I were in her shoes. The anti-authoritarian streak embedded in my DNA resents the government getting involved in her situation, but that is offset by the awareness that Bouvia forced the government’s hand by staging her showdown in a hospital. If I were her parent or loved one, I would fiercely defend her right to autonomy. But could I bring myself to assist with her wish to end her life by starvation? No, that’s something I could not do, and it’s imposing a terrible burden on the hospital as well. My heart breaks for everyone involved.

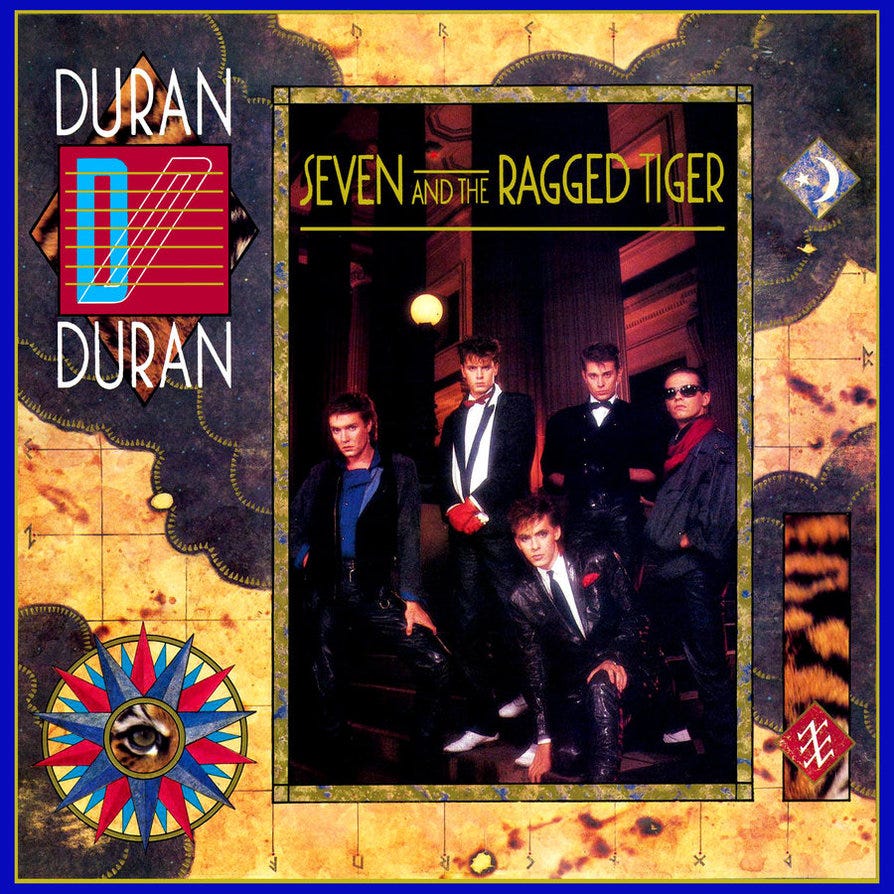

Now, how do I segue from this wrenching situation over to what I truly want to write about today: pop music? Specifically, Duran Duran? There’s no seamless way to do it but I will anyway. The “Fab Five” are flying up the charts with their “recent” album Seven and the Ragged Tiger. And now I can come clean and admit that I have always loved this band. Always—from the very first time I heard them. (Or, rather, saw them on MTV at my grandfather’s house). I love their command of pop hooks (especially choruses), the range-pushing attack of Simon Le Bon’s voice, the brittle funk of the rhythm section, the melodic synth work. I even love their look: stylishly turned-out in a way you rarely see in rock music—with the notable exceptions of Robert Palmer, David Bowie, and Bryan Ferry of Roxy Music (perhaps the band’s most obvious influence). Their songs are catchy and weird. I don’t know what many of them mean, but their energy has not diminished in the succeeding decades. Now, I have heard the critique that their 1980s music was pitched primarily at preteens (an idea I would contest) but I was a preteen then, and I needed something to listen to. And why not this image-savvy pop band that was conversant with Eno and Bowie’s “Berlin Trilogy”? In the 21st century, all the kids get is Kidz Bop. Take your pick.

A lot of people that I admire loathe this band, and I have gamely played along with that condescension most of my life. Hell, even when we were all barely into our teens, my friends (and, I suspect, I as well) were talking about Duran Duran dismissively, as something we had once foolishly liked as children but had grown out of. Well, fuck that. I’m a fan. Count me, at middle age, as a proud, flag-waving “Durannie.”

An aggressive voice among the haters in 1984 is Rolling Stone’s Christopher Connelly (who will go on to edit Premiere magazine and become an amiable fixture on MTV), who vents his distaste in the Jan. 19 issue in a review subtitled “They call this art?” He refers to the band as “dance-oriented wimpoids,” and, in what I suspect will be a familiar refrain of critics in this era, tars and feathers the album’s lyrical themes as “Reaganesque.”

Reading the review, I think, on the one hand, It’s nice to see some bite from Chris! But mostly I grieve for the nasty apprenticeship phase many of us music writers feel we must put ourselves through: that period when we are finding our voices while still in thrall to rhetorical pitbulls like Lester Bangs and Hunter S. Thompson (not a music writer himself but certainly a figure who looms large in the stylistic formation of many music scribes). This is not a takedown of Connelly—my own offenses in this regard have been, I suspect, more unfair than anything he wrote. And certainly, when a critic comes across a piece of music that is soul-crushingly awful, he is duty-bound to report that. But there is a curious undertone to Connelly’s piece: One gets the sense that he likes some of the music but feels obligated to join in the pile-up. He admits that “Union of the Snake” is “an A-1 single, a charging, foot-stomping romp with a suitably estranged sax break.” Elsewhere he notes that the band have recorded some “moderately enthralling pop singles.” Admit it, Chris! You’re shaking your ass to this stuff! If I may quote another critically unfashionable artist having a moment in January 1984: “Be yourself! Give your free will a chance!”

January 27, 1984 / January 27, 2020

I am largely deficient in the sports gene (or at least the team sports gene; solo competition in the Olympics is a different matter), so I don’t have much to say about last week’s (in 1984) Super Bowl contest between the Washington Redskins and the Los Angeles Raiders. I can’t even tell you who won, though the answer is a quick Google search away.

What’s more interesting to me is Ridley Scott’s lavish, Orwell-themed commercial for the newly unveiled Apple Macintosh. Aired just this once and never seen again—until the era of YouTube. Here, Scott combines the dark claustrophobia of his Blade Runner world with Orwell’s vision: We have a chamber filled with zombie-like figures staring at a flickering screen on which the bespectacled Big Brother hammers home messages of conformity, groupthink, strength in numbers. “We are one people, one will, one resolve, one cause,” he chants in Hitleresque cadences. “We shall prevail!” Intercut with this frightening scene is a lone female runner—blonde, athletic, determined; a burst of color amidst the surrounding grey—carrying a hammer while pursued by guards clad in riot gear. Before they can get to her, she hurls the hammer at the face on the screen. Big Brother explodes in a flash of blinding light.

“On January 24th,” a narrator intones, “Apple Computer will introduce Macintosh. And you’ll see why 1984 won’t be like 1984.” Cut to the Apple logo. Then fade to black.

First thought: What a heavy-handed way to flog a new desktop PC! But also: What a brilliant move. Steve Jobs is not off-base in casting his new device as revolutionary, though its legacy—or rather, the legacy of the long chain of devices and behaviors that will proceed from it—will prove a bit more complicated. Will it become the great liberator of hearts and minds that Jobs imagines? Perhaps for a time. But Big Brother has a knack for rebounding, recalibrating, and reasserting his presence. Perhaps because he is us.

I was discussing this commercial with a tech-industry colleague a couple days ago (in 2020), and he pointed out that in the context of the computer industry of 1984, the hive mind depicted in the ad was meant to represent the dominant paradigm of the single, massive mainframe computer with multiple terminals connecting back to it. The compact Macintosh, by contrast, represented independence, freedom, a stab at the heart of the conformist monolith. It was separate, walled off from the hive. My colleague noted wryly that we have now swung back to that original paradigm—with Apple devices leading the charge. Only now the mainframe is much bigger and much less tangible. It’s “in the cloud.” It’s everywhere.

But isn’t this the fate of all revolutions? “Meet the new boss / Same as the old boss.” Only—I’ve got to say, the Macintosh is really freakin’ cool! An older, pre-computer generation might look at the small, boxy contraption and say, “That’s it?” But that’s precisely the point: Its small, unassuming appearance is in fact its most impressive detail. It places on a desktop what, in living memory, used to fill an entire room—or building. Steve Jobs will spend much of the rest of his life repeating this magic trick.

My 1980s neighbor Paul D’Andrea, a playwright, was an early adopter of this device. A very early adopter—like, he may have had one by February ’84. I remember the allure his writing office took on after the Mac appeared—this small, glowing, softly whirring box sitting atop his clutter-free desk in place of a typewriter. It cast a stillness over the room, interrupted only by the clattering of D’Andrea’s dot-matrix printer as it spewed out the results of his collaboration with the device.

Of course, Paul’s sons and I were more interested in the games that came pre-loaded on the accompanying floppy discs. My favorite was a combined flight and aerial combat simulator. Visually primitive, but immersive, its graphics looked exactly like those of the full-size simulators we had seen at the science museum and at my dad’s flight school. Eventually—though I don’t recall if it was 1984 or later—Paul purchased a modem, which enabled the owner to play this simulator-combat game with other unseen users in real time. Truly the stuff of sci-fi fantasies.

So yes, in 1984 there is a tech revolution underway. Conveniently, it will make a lot of money for Mr. Jobs and his colleagues. But I have a hard time faulting the man. After taking in the commercial, I watched footage of the very young, floppy-haired, bow-tie-sporting Steve Jobs unveiling the Mac to an auditorium full of his Apple colleagues. The excitement of the audience was electric, and despite the barriers imposed by the grainy video and the ludicrously humble appearance of that small beige box with its tiny screen and floppy-disc slot, I felt myself swept up in the moment. What a triumph—a flash point akin to the hand of man touching the hand of God on the ceiling of the Sistine Chapel. I watched the usually self-assured Steve Jobs tear up, overwhelmed by the weight of this moment. I teared up too.

January 28, 1984 / January 28, 2020

Ain’t nothin’ gonna break my stride

Nobody gonna slow me down

Oh no

I got to keep on movin’

This week in 1984, the song “Break My Stride” hits number 5 on the Billboard Hot 100 chart. It is, to my ears, one of the great songs of the ’80s: a powerhouse pop earworm that I’ve probably heard on the radio several times a year, every year, since it debuted. It’s astonishing, in retrospect, that it didn’t reach #1 and stay there for weeks. It’s also astonishing that even though “Break My Stride” has remained a constant fixture, I had never, until now, learned the name of the song’s composer and singer, Matthew Wilder. (During the file-sharing era, I remember an MP3 of the song floating around mistakenly attributed to Matthew Sweet.) I had vaguely assumed that the song was by the singer of Supertramp, but no, it’s from Wilder’s debut album, I Don’t Speak the Language. And that album, I’ve just discovered, is a treasure that has been hiding in plain sight. Kicking off with “Break My Stride,” it rolls on to “The Kid’s American”—another great pop song—before delivering a knockout blow in the title track: a hypnotic meditation on the life of post-impressionist painter Paul Gauguin. The rest of the album is nearly as good. Who are you, Matthew Wilder? Where have you been my whole life? And where did you go?

“Break My Stride” was clearly a phenomenon of the pre-Internet era, and in fact it stands as the line of demarcation between the pure-radio and video eras. There was no formal video made for the song. Wilder did mime the song for a television appearance, which is what comes up now if you search YouTube for it, and he did follow his song’s sudden success with a rudimentary video for “The Kid’s American” and a more sophisticated, albeit awkward, video for “Bouncin’ off the Walls” (the title track from a quickly released follow-up LP). I can find very little online about Wilder’s pre-1984 background beyond some basic info on Wikipedia: His birth name is Weiner; he was one half of the folk duo Matthew and Peter in the early ’70s. Then… nothing until the mega success of that first single. As far as I can tell, he wasn’t covered much by the press even at the height of the song’s chart dominance. Again, emblematic of the times. Can we imagine someone having a hit this big in the current era without the Internet (and especially social media) all over him or her?

As to the question of what happened to him, there are two answers. Matthew Wilder the pop star went nowhere. He never released a third album. This is pure conjecture, but by the time of the “Bouncin’ off the Walls” video, I perceive terror on his face—the look, possibly, of a success-hungry young man watching his chances slip away. In any case, the stabs at a visual persona did not help his career. In the late 1983-early 1984 footage he looks like Weird Al Yankovic’s dodgy older brother. By year’s end he is clean-shaven, has a bleached perm, and looks as if he’s going trick-or-treating as Brian May of Queen. Neither of these is a winning frontman look.

But there is a fascinating second act to the Matthew Wilder story. He did not disappear. Not really. He just changed lanes. In fact, his talent and productivity grew as he settled into a successful career as a songwriter and producer for other artists. He produced No Doubt’s breakthrough album Tragic Kingdom; he wrote songs for Miley Cyrus, Kelly Clarkson, Selena Gomez, and Christina Aguilera. He wrote music and voiced one of the major characters for the hit Disney movie Mulan. It’s not an exaggeration to say that his fingerprints are all over modern popular music. And yet, again, where are the Billboard profiles and the Slate/Vanity Fair/Buzzfeed pieces? He remains, as before, hidden in plain sight. (He does have a Twitter feed though. And something is stirring—perhaps some sort of bleed-through between the two timestreams: As of this writing, “Break My Stride” has become a 2020 TikTok meme. I don’t know what that is.)

(2024 Editor’s note: Just this month, Matthew Wilder posted his documentary The Strangest Dream—which tells his entire story—to YouTube. It’s mesmerizing.)

January 29, 1984 / January 29, 2020

A couple nights ago (in 1984), Reagan gave his State of the Union address. Both his best and worst qualities were on display, but let’s start with the former. At about the ¾ mark, he looked straight at the Soviet ambassador and said:

Tonight I want to speak to the people of the Soviet Union, to tell them it’s true that our governments have had serious differences, but our sons and daughters have never fought each other in war, and if we Americans have our way, they never will. People of the Soviet Union, there is only one sane policy, for your country and mine, to preserve our civilization in this modern age: A nuclear war cannot be won and must never be fought. The only value in our two nations possessing nuclear weapons is to make sure they will never be used. But then, would it not be better to do away with them entirely?

People of the Soviet..., President Dwight Eisenhower, who fought by your side in World War II, said the essential struggle is not merely man against man, or nation against nation, it is man against war. Americans are people of peace. If your government wants peace, there will be peace. We can come together in faith and friendship to build a safer and far better world for our children, and our children’s children, and the whole world will rejoice. That is my message to you.

This plea, delivered with emotion and sincerity, seemed pretty far afield from the wishes of his more hawkish colleagues and supporters, or from some of his own earlier rhetoric. To his critics, his words must have appeared the height of hypocrisy given the Pershing missiles recently positioned all over Europe. Ah, but I have the time-traveler’s knowledge that he will make good on what he said regarding the relations between our two countries. (Less so on the bit about the complete eradication of nuclear weapons.)

Then the bad… and he pivoted to this so quickly as to induce whiplash: In virtually the same breath as saying we (the US) are never aggressors, he went on to laud the heroism of the Marines in the recently completed Grenada invasion. Not to take anything away from the Marines; they did indeed perform heroically. But in evoking what even some of Reagan’s sympathetic biographers concede was an elective—and aggressive—military operation so soon after extolling America’s non-interventionist disposition, he displayed the myopia that was his critics’ most powerful ammunition. There is the sense with Reagan and his administration that the right hand does not always know what the left hand is doing.

As I have written elsewhere, I am susceptible to the charms of this president. Something in the combination of his Golden Age of Hollywood glamour and my childhood associations with him makes me almost willing to follow him over the cliff. Almost. Stuff like this stops me.

Conspicuously absent from the State of the Union address is any mention of AIDS.

This I remember: By 1984, AIDS is in the public consciousness: a worry slowly ratcheting up; an ominous and bitterly ironic acronym (there is little aid available for the person who has received an AIDS diagnosis). And into the information vacuum creep all of the usual suspects: fear, paranoia, tribalism, denial. The AIDS jokes induce a skin-crawly feeling even in a nine-year-old who barely understands anything about the world. Pre-Magic Johnson, the disease is a scarlet letter. If you’ve got it, you’ve been found out; you’ve likely done one of two things. (A small corner of public sympathy is reserved for children who have caught it from a blood transfusion.) And fears of catching it from kissing or toilet seats persist.

We retreat into the cold calculation of “Better them than us.” On the Newsweek on Air radio program, the reporter and researcher talk of the “comfort” in seeing that the disease remains largely confined to the high-risk groups of male homosexuals and intravenous drug users. This is both surprisingly honest and deeply insensitive. For who in the non-“high risk” population is not breathing a sigh of relief? We seem to be okay. We’re not at risk. But of course, there is zero comfort for a gay man in 1984, only terror and, I suspect, anger. Anger at the lack of information. Anger at the apparent lack of concern from those in power. Anger at the scapegoating. As Michael Stipe from R.E.M. will later say, “Suddenly fucking could kill you.”

But I must be careful here. I do not have the right to speak for either of the affected communities. In my 1990s future, Obsessive Compulsive Disorder will fuel worries of catching AIDS from a barber’s razor, unclean public spaces, or perceived lack of vigilance in my sex life. But part of me will always know this is far-fetched. I am one of the lucky ones; I remain on the lifeboat.

Why is Reagan not talking about AIDS? He is a broadly popular president, and a few words in the State of the Union urging compassion would go a long way. I feel acutely my inability to interfere with the time stream. I can argue with the screen, but I am arguing behind the glass that separates my time machine from the reality of 1984. Think of it this way: every night, if we look up into the sky, we see stars as they existed tens, or hundreds, or thousands of years ago. Because light takes that long to reach us, it all seems in the present to us, but some of those stars may have been snuffed out by now. In any case we can’t do anything to impact the shifting course of the heavens we see before our eyes. All we can do is watch. We are here. They are there. The streams run parallel. But also: one ran before, one runs now.

Next up: Part 2